TINYML-ENABLED FRUGAL SMART OBJECTS: CHALLENGES AND OPPORTUNITIES - IOT CRAWLER

←

→

Page content transcription

If your browser does not render page correctly, please read the page content below

Feature

TinyML-Enabled

Frugal Smart Objects:

Challenges and

Opportunities

Ramon Sanchez-Iborra and Antonio F. Skarmeta

©ISTOCKPHOTO.COM/MONSITJ

4 IEEE CIRCUITS AND SYSTEMS MAGAZINE 1531-636X/20©2020IEEE THIRD QUARTER 2020

Authorized licensed use limited to: Universidad Politecnica de Cartagena. Downloaded on August 18,2020 at 08:22:56 UTC from IEEE Xplore. Restrictions apply.Frugal objects are independent “things” specialized

Abstract

in perform concrete operations that can be programmed

The TinyML paradigm proposes to integrate Machine Learning

(ML)-based mechanisms within small objects powered by Mi- with software rather than requiring specific electron-

crocontroller Units (MCUs). This paves the way for the develop- ics for each task, so they can be massively produced at

ment of novel applications and services that do not need the inexpensive cost. These devices incorporate enough

omnipresent processing support from the cloud, which is power

processing and memory resources, sensors, and con-

consuming and involves data security and privacy risks. In this

work, a comprehensive review of the novel TinyML ecosys- nectivity capabilities to perform the required operation,

tem is provided. The related challenges and opportunities are while being highly energy efficient. A concise definition

identified and the potential services that will be enabled by the of types of MCU-based devices according to their hard-

development of truly smart frugal objects are discussed. As a

ware resources is given in [8].

main contribution of this paper, a detailed survey of the available

TinyML frameworks for integrating ML algorithms within MCUs The penetration of frugal objects in our lives due to

is provided. Besides, aiming at illustrating the given discussion, the emergence of the Internet of Things (IoT) is skyrock-

a real case study is presented. Concretely, we propose a multi- eting, thus provisioning them with cognitive skills opens

Radio Access Network (RAT) architecture for smart frugal ob-

a plethora of opportunities for developing a widespread

jects. The issue of selecting the most adequate communication

interface for sending sporadic messages considering both the web of collective intelligence (Fig. 1) [9]. Different para-

status of the device and the characteristics of the data to be digms such as fog or edge computing are fueling this

sent is addressed. To this end, several TinyML frameworks are hyper-connected distributed scheme supported by the

evaluated and the performances of a number of ML algorithms

new wave of communication technologies, which permit

embedded in an Arduino Uno board are analyzed. The attained

results reveal the validity of the TinyML approach, which suc- coordinating end-devices efforts to place intelligence

cessfully enables the integration of techniques such as Neural close to the users [10]. Nevertheless, given the power

Networks (NNs), Support Vector Machine (SVM), decision trees, constraints of MCUs, reducing data transmissions is cru-

or Random Forest (RF) in frugal objects with constrained hard-

cial for their sustainability as this type of operations are

ware resources. The outcomes also show promising results in

terms of algorithm’s accuracy and computation performance. more power demanding than computational tasks [11].

In this line, much data captured by sensors are

wasted because of transporting costs, bandwidth limita-

I. Introduction tions, or power constraints. For those reasons, perform-

M

achine Learning (ML) has revolutionized our ing on-device ML processing is a buzz trend today. The

way of understanding the world by enabling the TinyML community [12] is boosting the integration of ML

inference of valuable information and knowledge within MCU-based smart frugal objects. In fact, forecasts

from huge amount of data, so far invisible to human eye. predict that the global edge computing market will reach

Data are produced by our digital activity and grow at al- 1.12 trillion dollars by 2023 [13] and some strong compa-

most unlimited rates [1], hence calling for large pools of nies such as Ericsson are already offering TinyML-as-a-

resources in terms of processing power and storage for Service solutions [14]. In this paper, we provide a compre-

their handling and analysis. In this regard, research and hensive overview of current challenges and opportunities

innovation advances during the last years have been

notable in multiple areas of study [2]–[5]. These efforts

have focused on obtaining a better response from the

wide range of available ML techniques, e.g., Deep Learn-

ing (DL), Neural Networks (NNs), Reinforcement Learning

(RL), clustering, etc., by exploiting the potential offered

by state-of-the-art processors, data-centers, and super-

computers [6]. However, a non-negligible segment of

processing entities has received scarce attention due to

their inherent computing constraints: Microcontroller

Units (MCUs). This tiny processing entities are usually

embedded in daily-use appliances such as vehicles, medi-

cal devices, personal gadgets, etc., but they may also con-

stitute the core processing unit of small frugal objects [7].

Digital Object Identifier 10.1109/MCAS.2020.3005467

Figure 1. Interconnected smart services.

Date of current version: 12 August 2020

Ramon Sanchez-Iborra and Antonio F. Skarmeta are with the University of Murcia, Murcia, Spain, (ramonsanchez@um.es; skarmeta@um.es).

THIRD QUARTER 2020 IEEE CIRCUITS AND SYSTEMS MAGAZINE 5

Authorized licensed use limited to: Universidad Politecnica de Cartagena. Downloaded on August 18,2020 at 08:22:56 UTC from IEEE Xplore. Restrictions apply.opened by this emerging hot-topic. A dissection of the ML algorithms within a MCU powered device, and the

potential services that will benefit from the introduction attained results. Finally, Section V summarizes the most

of ML-powered mechanisms into frugal objects is also important findings and draws future research lines.

presented. Currently available frameworks that per-

mit to embed ML schemes into MCUs are explored and II. Machine Learning in Frugal Objects

reviewed. Although some performance evaluations of The society digitalization achieved during recent times

certain frameworks have been reported [15], [16], to the has democratized the daily use of a plethora of frugal

authors’ knowledge there is not any prior work provid- objects, e.g., wearables, environmental data collectors,

ing a comprehensive survey focused on these valuable actuators, etc. The integration of intelligence within

tools. Besides, we elaborate on a specific use case which these small devices brings a series of advantages

is the intelligent communication interface selection in a that outweigh the possible cons. In the following, we dis-

constrained multi-Radio Access Technology (RAT) de- cuss these aspects aiming at exploring the possibilities

vice, by considering the system status as well the char- opened by TinyML from a realistic perspective.

acteristics of the data to be sent. To this end, an archi-

tectural design of the proposed frugal object and a real A. Benefits

on-device implementation of a ML-based decision-mak-

ing mechanism are finally showed. Therefore, the main 1) Energy Efficiency

contributions of this work are the following: This is the first positive point that comes to mind when adopt-

■■ A wide discussion of the TinyML concept as well ing MCU-based solutions. Whereas powerful processors and

as the range of potentially benefited applications. Graphics Processing Units (GPUs) demand great amount of

■■ A survey of the principal available frameworks for power, MCUs present a reduced energy consumption, even

integrating ML algorithms within MCUs. working at their maximum workload level. This permits

■■ Insights regarding the real implementation of MCUs to rely on batteries or even energy harvesting.

ML-based schemes over frugal objects to solve Therefore, smart frugal objects can be placed almost every-

the problem of intelligent interface selection in a where without the need of being plugged to the power grid,

multi-RAT set up. which opens the door for novel cognitive itinerant applica-

The rest of the paper is organized as follows. Sec- tions [17]. Furthermore, having a scarce power consumption

tion II provides a comprehensive overview of the TinyML allows the smart unit to be coupled with larger battery-pow-

landscape. Section III presents a survey of the available ered devices, hence converting them into connected smart

frameworks for integrating ML within frugal objects. entities, e.g., personal mobility devices such as scooters or

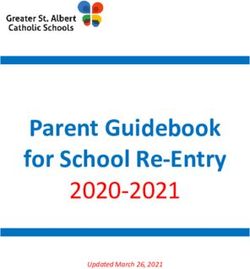

Section IV focuses on the specific use case of devices segways. Fig. 2 compares the main characteristics of devices

with multiple communication interfaces, presenting the powered either by microcontroller or microprocessor.

proposed architecture, the implementation process of

2) Low Cost

The simplicity and hardware constraints of frugal objects

Processing Power

High

leads to a moderate cost per unit. Clearly, this has been

one of the main reasons for the quick and fruitful exploi-

Simplicity Medium Memory

tation of IoT architectures in a large range of segments:

industry, agriculture, e-health, or entertainment, among

Low

others [18]. These deployments consist of a large number

of frugal objects specialized on performing varied tasks.

Low-Cost Storage

The heterogeneity of employed end-devices, as well as

their reprogrammability bring endless opportunities for

enriching current deployments with intelligence, hence

enabling their evolution into smart and flexible systems.

Portability Multi-RAT

3) System Reliability and Data Security

Energy Efficiency As stated above, wireless transmissions require much

Microcontroller-Based Device energy in comparison with processing tasks. This

Microprocessor-Based Device involves a real need for limiting communication activi-

ties in frugal objects. Although this could be seen as a

Figure 2. Feature comparison between MCU and micropro- negative aspect, it actually allows to increase system’s

cessor-powered devices.

reliability as well as data security and privacy. Big data

6 IEEE CIRCUITS AND SYSTEMS MAGAZINE THIRD QUARTER 2020

Authorized licensed use limited to: Universidad Politecnica de Cartagena. Downloaded on August 18,2020 at 08:22:56 UTC from IEEE Xplore. Restrictions apply.applications require to stream raw data over unpredict- sets are suitable for straight-through ML integration, which

able and lossy wireless channels from end-devices to the will permit an easy application development with the sup-

cloud. This strategy is bandwidth consuming and prone port of device-level software. In this regard, it is important

to transmission errors and cyber-attacks, e.g., man-in- that future firmwares could reach high reliability levels and

the-middle or eavesdropping, so that the transmitted tackle key aspects such as in-device data security and op-

data may be lost or compromised. These issues are timized transmission operations. In addition, cloud players

avoided by performing on-device data processing which should develop enhanced platforms and APIs to allow ef-

permits to perform fewer transmissions with aggregated ficient data exchanges between objects and cloud. Finally,

or meaningless data for an attacker. the adaptation of mainstream data-science frameworks

to MCU requirements is crucial for a wide expansion of

4) Latency TinyML. Not only programming libraries but also lifecycle

This is another advantage of performing local pro- management tools are needed to simplify solution develop-

cessing instead of offloading computing tasks onto the ment, data handling, etc. As discussed in Section III, a first

cloud. State-of-the-art IoT RATs, e.g., Low Power Wide wave of TinyML frameworks and developing tools is already

Area Network (LPWAN) [19], have notably improved available, which will foster the arrival of novel services and

energy efficiency and transmission distances at the applications to the market by attracting new players.

expense of reducing data-rate. This fact leads to very

long message’s times-on-air that add undesired delay C. Applications

to the whole operation. Besides, on-device computation Many IoT applications are already integrated in our daily

efficiency of complex ML algorithms is being deeply in- personal and professional activities, although many of

vestigated in order to achieve real-time execution and them are prone to be strengthened with local smart pro-

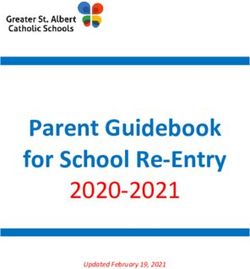

notable advances are being achieved [20]. cessing capabilities (Fig. 3). Most of current IoT devices

make an extensive use of network-related operations,

B. Challenges which is not always the best option as discussed previ-

Given the discussed advantages, integrating ML within ously. For instance, wearables such as fitness monitors or

frugal objects also presents important challenges to be “smart” watches are not that smart, as they rely on a Blue-

overcome. These are mostly related to device heteroge- tooth-coupled smartphone which assumes the majority

neity and MCU’s constraints. of processing tasks. Integrating ML within frugal objects

permits their independence from a master device, which

1) Device Heterogeneity will notably enrich current applications landscape.

Regarding this aspect, the enormous range of existing

devices is a notable obstacle for having a general set 1) eHealth

of TinyML tools that could be valid for most of MCU- This is one of the segments with greater growth potential.

based set-ups. These units may present different power Health monitoring, visual assistance, hearing aids, per-

consumption, processing capabilities, memory, storage sonal sensing, activity recognition, etc., are just few ex-

capacity, embedded RATs, peripherals, communication amples of applications that will exploit truly-smart end-

ports and protocols, etc., which also hinders the design devices. Beyond the health-care perspective, augmented

of generic benchmarking methodologies in TinyML sys- reality, real-time voice recognition, language transla-

tems [21]. Therefore, the implementation of universal tion, context-aware support systems, or precise indoor/

development and benchmarking frameworks coping outdoor positioning are other wearable-based services

with this heterogeneity is not an easy task although it is to come in a near future [23].

highly necessary for increasing awareness and adoption.

2) Smart Spaces

2) MCU Constraints ML-enabled frugal objects will also contribute to the

So far computation and memory constraints have not been collective intelligence already present in scenarios such

a limitation given the quantity and quality of available re- as smart cities or cognitive buildings, among many

sources in modern workstations and data centers. Howev- others [24]. Current IoT-based monitoring and surveil-

er, up-scaling computing system’s resources according to lance systems, e.g., traffic, pollution, crowdsensing, etc.,

the processing needs of ML mechanisms is not a valid ap- will evolve to smart and autonomous entities capable of

proach for MCUs [22]. For that reason, TinyML ecosystem making decentralized and quick decisions. The deploy-

players should coordinate effective efforts for achieving a ment simplicity and independence from the power grid al-

functional convergence between ML and frugal objects. In low things to be placed in remote and rural areas, hence

this line, MCU manufacturers should ensure that their chip- creating smart spaces with seamless roaming among them

THIRD QUARTER 2020 IEEE CIRCUITS AND SYSTEMS MAGAZINE 7

Authorized licensed use limited to: Universidad Politecnica de Cartagena. Downloaded on August 18,2020 at 08:22:56 UTC from IEEE Xplore. Restrictions apply.4) Industry 4.0

The potential impact of TinyML is

enormous in industrial and manu-

facturing sectors as they are expe-

Smart City

riencing a digitalization process

under the umbrella of the Industry

4.0 revolution [28]. The processing

tasks in these fields are highly het-

erogeneous in terms of computing

eHealth Environmental or memory requirements, which

Monitoring causes that MCUs are not always ca-

pable of running certain tasks. How-

ever, the integration of ML-based

Decision Support Systems (DSSs)

within MCUs may permit them to

determine whether to assume a cer-

tain computation task or offloading

Industry and it onto upper processing layers such

Smart Home

Business as edge or cloud [29]. Besides, the

refinement of production process-

es, decision making, asset tracking,

etc. will be driven by the integration

Vehicular of intelligence along the production

chain as well.

5) Smart Agriculture

and Farming

Figure 3. TinyML ecosystem.

These sectors are also under a

deep digital transformation [30]. By

[25]. Besides, the reduced cost of end-devices will also fos- adopting ML-based smart monitoring and control, both of

ter their adoption in disadvantaged areas, which may help them will increment the efficiency and health of crops and

to revamp local economy and business activities [26]. animals, which will lead to greater revenues due to the im-

provement of production quality. Finally, common daily ac-

3) Vehicular Services tivities and home appliances will also reach the next level

Thanks to the alliance between ML and MCUs, the vehicu- with the support of ML-enabled devices. For example, bio-

lar market and, concretely, the Cooperative—Intelligent metric e-locks will be generalized as well as context-aware

Transportation Systems (C-ITS) will be enriched with a seg- domotic systems and wellbeing applications. Intelligent

ment of vehicles that has been ignored so far, namely, per- pet trackers and feeders, smart garden-irrigation control,

sonal mobility devices. Urban mobility trends are changing or advanced safety & security home systems, are other

during the last times due to the emergence of sustainable use cases that will be enabled thanks to the upcoming

and healthy vehicles such as shared bikes, electric mo- TinyML revolution [31].

peds, scooters, etc. However, current vehicular networks

just consider traditional road transportation means, i.e., III. Tinyml Frameworks

cars, buses, trucks, etc., as their equipped On-Board Units Given the great potential of TinyML to revolutionize mul-

(OBUs) require great amount of power to operate. Although tiple sectors, a number of libraries and tools for easing

some efforts have been done for connecting motorcycles to the implementation of ML algorithms on constrained plat-

C-ITS infrastructures [27], personal vehicles have not been forms are available in the market. TinyML frameworks

considered due to their novelty and inherent constraints. can be categorized into three different families. The most

However, coupling these devices with MCU-based OBUs common approach relates to the conversion of already

paves the way for their integration within the C-ITS and trained models for adapting them to the restrictions of

smart-city ecosystems. Therefore, light vehicles will have MCUs. Thus, these tools usually take inference engines

access to basic vehicular services such as driving safety, generated by well-known ML libraries such as TensorFlow

device status monitoring, route planning, etc. [32], Scikit-Learn [33], or PyTorch [34], among others,

8 IEEE CIRCUITS AND SYSTEMS MAGAZINE THIRD QUARTER 2020

Authorized licensed use limited to: Universidad Politecnica de Cartagena. Downloaded on August 18,2020 at 08:22:56 UTC from IEEE Xplore. Restrictions apply.and port their code in order to be executed by scarce ect compatible with existing ML frameworks such as Ten-

resource devices. The second approach bets on integrat- sorFlow and Caffe [57], and the ONNX format. As ARM-NN

ing ML libraries within MCUs, hence providing them with does not provide support to the Cortex-M CPUs, ARM has

local training and analysis capabilities. This permits to also released the Cortex Microcontroller Software Inter-

generate models from data retrieved by the own device, face Standard-NN (CMSIS-NN) [42], which is a collection of

which may improve the accuracy of the model and also NN kernels optimized to be executed on this processor se-

enables the implementation of unsupervised learning al- ries. CMSIS-NN is able to convert floating point-based NNs

gorithms. Finally, the last approach relies on the availabil- generated by popular libraries, e.g., TensorFlow, PyTorch,

ity of a dedicated co-processor that supports the main and Caffe, into fixed-point format that can run on Cortex-M

computing unit on ML-specific tasks. This strategy per- devices. Another electronics manufacturer that has devel-

mits to enhance the computation performance, although oped specific libraries for its devices is STMicroelectron-

it is the less common approach as the price and complex- ics. Concretely, the STM32 Cube.AI toolkit [43] permits

ity of the processing platforms are notably increased. to integrate pre-trained NNs within STM32 ARM Cortex-

Big technological companies are promoting the expan- M-based microcontrollers. It generates STM32-compatible

sion of the TinyML ecosystem by offering open-source C code from NN models generated by Tensorflow and

development libraries. Google has launched TensorFlow Keras [58], or in the standard ONNX format. As an inter-

Lite [35], which includes a set of tools to adapt Tensor- esting feature, STM32Cube.AI permits to run large NNs by

Flow models aiming at making them to be runnable in mo- storing weights and activation buffers in external flash

bile and embedded devices. This framework is composed memory and RAM, respectively.

of two main elements, namely, the converter, which ports Besides open-source initiatives, some institutions

TensorFlow models to optimized code that can be execut- and companies have also launched licensed products.

ed in constrained platforms, and the interpreter, which The Fraunhofer Institute for Microelectronic Circuits and

actually runs the code generated by the converter. The Systems (IMS) has developed the Artificial Intelligence

optimized models, which cover a number of algorithms for Embedded Systems (AIfES) library [44], which is a

from the NN family, can be executed in a range of plat- C-based and platform-independent tool for generating

forms such as smart-phones, embedded Linux and MCUs. NNs compatible with a range of MCUs, e.g., Arduino UNO,

Focusing on the latter, the optimized code is provided in ATMega32U4, or STM32 F4 Series. It can also produce ef-

C++ 11 and requires 32-bit processors to be run. It has ficient code for Windows in the form of Dynamic Link Li-

been successfully tested in devices with ARM Cortex-M brary (DLL) and Linux platforms such as Raspberry Pi.

series processors, e.g., Arduino nano, and other architec- In its current version, it is able to extracts all parameters

tures such as ESP32. Given the notoriety of Arduino, a from models produced by TensorFlow or Keras. Differ-

specific library for this platform is available through its ent from the frameworks reviewed above, AIfES permits

IDE. Besides the development of TensorFlow Lite, another to implement the NN training process on the embedded

related Google’s initiative has been the adaptation of the device, i.e., it belongs to the second family of frameworks

GO programming language to microcontrollers [36]. In identified above. Cartesiam’s NanoEdge AI Studio [45] is

turn, Microsoft has also contributed to the TinyML scene another commercial product which permits to create ML

by releasing its open source Embedded Learning Library static libraries to embed them in Cortex-M-based MCUs.

(ELL) [37]. This framework permits to design and deploy Similar to AIfES, it allows to integrate the training process

pre-trained ML models onto constrained platforms, e.g., within the constrained device but, in addition, it also

ARM Cortex-A and Cortex-M architectures such as Ardu- permits to run unsupervised learning algorithms on the

ino, Raspberry Pi, and micro:bit. ELL can be understood MCU. As a part of this toolkit, a microcontroller emula-

as an optimizing cross-compiler that runs on a regular tor is provided in order to test and debug the generated

desktop computer and outputs C++ code ready to be ex- model prior to its deployment on the device.

ecuted on the targeted single-board computer. ELL’s API Surprisingly, all the frameworks presented so far only

can be employed either from C++ or Python and makes focus on a single type of ML algorithm, namely, NN. How-

use of pre-trained NN models produced by the Microsoft ever, different initiatives released by makers, developers,

Cognitive Toolkit (CNTK) [38], Darknet [39], or in Open and researchers also consider other ML techniques such

Neural Network Exchange (ONNX) format [40]. as Naive Bayes classifier, decision trees, k-Nearest Neigh-

ARM is also pushing for the integration of ML in their bors (k-NN), among others. MicroML [46] is a project that

devices. The ARM-NN toolkit [41] is part of Linaro’s ML permits to port Support Vector Machine (SVM) and Rel-

Initiative [56] and allows to integrate NN workloads within evance Vector Machine (RVM) algorithms to C code that

ARM Cortex-A CPUs, ARM Mali GPUs, and Ethos Neural can run in a number of MCUs, e.g., Arduino, ESP8266,

Processing Units (NPUs). ARM-NN is an open-source proj- ESP32, and others with C support. It interoperates with the

THIRD QUARTER 2020 IEEE CIRCUITS AND SYSTEMS MAGAZINE 9

Authorized licensed use limited to: Universidad Politecnica de Cartagena. Downloaded on August 18,2020 at 08:22:56 UTC from IEEE Xplore. Restrictions apply.widely-used Scikit-learn toolkit and transforms the mod- Table I summarizes the main characteristics of the

els generated by this library in order to be executed on frameworks reviewed in this section.

8-bit microcontrollers with 2 kb of RAM. Also compatible

with Scikit-learn, sklearn-porter [47] permits to transpile IV. Use Case: Smart Multi-Rat Device

trained estimators to C, Java, JavaScript, GO, Ruby, and Most of the latest efforts related to the evolution of the

PHP. Given this variety of supported languages, it is a very IoT ecosystem have focused on the development of novel

complete framework as it is also compatible with a range communication technologies and protocols to provide

of ML algorithms. Specifically for C language, sklearn- end-devices with ubiquitous connectivity. Thus, it is com-

porter is able to port Scikit-learn models of SVM, decision mon to find MCU-based objects with a variety of integrat-

trees, Random Forest (RF), and AdaBoost classifier. How- ed RATs, which present different characteristics in terms

ever, the versatility of this library hinders it to generate of data-rate, latency, energy consumption, etc. As dis-

microcontroller-optimized code in terms of required RAM. cussed previously, a convenient management of commu-

A similar tool is m2cgen [48], which is also able to transpile nication activities is crucial for ensuring the sustainability

Scikit-learn-trained models into a native code, e.g., Python, of the end-device; besides, the Quality of Service (QoS)

C, Java, Go, JavaScript, Visual Basic, etc. In this case, both requirements of data traffic should be taken into account

the number of compatible algorithms as well as the target as well. For those reasons, we propose the application of

programming languages are even greater than those sup- ML techniques to smartly decide which is the most ad-

ported by sklearn-porter. A more limited tool is Weka-por- equate communication interface for transmitting a given

ter [49], which coverts decision tree classifiers generated message by considering both the status of the device and

by WEKA [59] into C, Java and JavaScript codes. EmbML the characteristics of the message to be sent. Adopting a

[50] is a library that permits to convert models trained by ML approach provides flexibility to the interface selection

WEKA or Scikit-learn into C++ source-code files that can mechanism in the case of adding new communication in-

be compiled and executed in constrained platforms. This terfaces or removing some of the existing ones. Thus, the

library has been tested in Arduino and Teensy [60] boards multi-RAT frugal object is enriched with awareness capa-

and it supports different ML algorithms such as decision bilities unavailable for current IoT devices. Aiming at pro-

trees, SVM, and NN. viding insights regarding the design and implementation

emlearn [51] is a framework that produces portable C99 processes, firstly we propose and describe a multi-RAT

code from Python libraries such as Scikit-learn or Keras. smart object architecture and, then, we explore the inte-

This library is compatible with generated models of a range gration process of TinyML-based algorithms on real hard-

of algorithms, e.g., RF, decision trees, Naive Bayes, or linear ware in order to tackle the mentioned decision problem.

models, and has been tested in different platforms, namely,

AVR Atmega, ESP8266 and Linux. Focused on extending the A. Architecture

compatibility of TensorFlow models, uTensor [52] and Ti- The proposed architecture is shown in Fig. 4. The core

nyMLgen [53] are two libraries that produce C code ready element of the device is the MCU which gathers the pro-

to be integrated within different families of MCUs. While the cessing unit as well as program and data memories. In this

former is focused on Mbed boards [61], the latter exports regard, we consider constrained processing platforms as

the trained model to a plain C array, hence ready to be used those described in [8]. Focusing on storage, it is usual to

in a wider range of microcontrollers. find commercial devices with SD-card m odules to expand

Finally, two proposals from the Academia are focused their capacity, so this is not a big limitation in current de-

on optimizing the implementation of different types of NNs velopment boards. As observed in the diagram, the device

on ARM Cortex-M processors. CMix-NN [54] is an open- is also equipped with a series of embedded sensors as well

source mixed-precision library for quantized NNs deploy- as digital/analog pins for enabling the connection of exter-

ment on MCUs. This tool supports convolutional kernels nal elements. To this end, a range of communication proto-

with precision of 2, 4, or 8 bits, for any of the operands. cols are frequently employed in MCUs, e.g., Universal Asyn-

In their work, authors made use of the convolutional NN chronous Receiver/Transmitter (UART), Serial Peripheral

models produced by MobileNets [62]. In turn, FANN-on- Interface (SPI), or Inter-Integrated Circuit (I2C). Although

MCU [55] is another open-source framework which is built displays are highly consuming, they are useful to show im-

upon the Fast Artificial Neural Network (FANN) library [63] portant events or status messages. Besides, recently small

aiming at running lightweight NNs on microcontrollers. e-Paper displays have arrived to the MCU market, which

Concretely, this toolkit is able to process Multi-Layer Per- present insignificant energy consumption levels [65].

ceptrons (MLPs) trained with FANN and produces code The availability of multiple RATs embedded in the de-

that, besides the mentioned ARM Cortex-M processor, can vice is also one of its key characteristics. WiFi or Blue-

run on Parallel Ultra-Low Power Platforms (PULP) [64]. tooth are the most usual technologies as they are very

10 IEEE CIRCUITS AND SYSTEMS MAGAZINE THIRD QUARTER 2020

Authorized licensed use limited to: Universidad Politecnica de Cartagena. Downloaded on August 18,2020 at 08:22:56 UTC from IEEE Xplore. Restrictions apply.Table I.

TinyML frameworks.

Interoperable

Compatible Output External Publicly

Framework Algorithms Platforms Languages Libraries Available Main Developer

TensorFlow Neural ARM Cortex-M C++ 11 TensorFlow Yes Google

Lite [35] networks

ELL [37] Neural ARM Cortex-M C CNTK Yes Microsoft

networks ARM Cortex-A C++ Darknet

Arduino ONNX

micro:bit

ARM-NN [41] Neural ARM Cortex-A C TensorFlow Yes ARM

networks ARM Mali Caffe

Graphics ONNX

Processors

ARM Ethos

Processor

CMSIS-NN Neural ARM Cortex-M C99 TensorFlow Yes ARM

[42] networks Caffe

PyTorch

STM 32Cube. Neural STM32 C Keras Yes STMicroelectronics

AI [43] networks TensorFlow

Lite Caffe

ConvNetJs

Lasagne

AIfES [44] Neural Windows (DLL) C TensorFlow No Fraunhofer IMS

networks Raspberry Pi Keras

Arduino

ATMega32U4

STM32 F4

Series

ARM Cortex-M4

NanoEdge Unsupervised ARM Cortex-M C — No Cartesiam

AI Studio [45] learning

MicroMLGen SVM Arduino C Scikit-learn Yes Particular

[46] RVM ESP32 developer

ESP8266

sklearnporter SVM Multiple C Scikit-learn Yes Particular

[47] Decision tree constrained & GO developer

Random Forest non- Java

Ada Boost constrained JavaScript

Classifier platforms PHP

k-NN Ruby

Naive Bayes

Neural

networks

m2cgen [48] Linear Multiple C Scikit-learn Yes Particular

regression constrained & C# developer

Logistic non- Dart

regression constrained Go

Neural platforms Java

networks JavaScript

SVM PHP

Decision tree PowerShell

Random Forest Python

LGBM R

Classifier Visual Basic (Continued )

THIRD QUARTER 2020 IEEE CIRCUITS AND SYSTEMS MAGAZINE 11

Authorized licensed use limited to: Universidad Politecnica de Cartagena. Downloaded on August 18,2020 at 08:22:56 UTC from IEEE Xplore. Restrictions apply.Table I.

TinyML frameworks. (Cotinued)

Interoperable

Compatible Output External Publicly

Framework Algorithms Platforms Languages Libraries Available Main Developer

weka-porter Decision trees Multiple C Weka Yes Particular

[49] constrained & Java developer

non- JavaScript

constrained

platforms

EmbML [50] Decision trees Arduino C++ Scikit-learn No Research group

Neural Teensy Weka

networks

SVM

emlearn [51] Decision trees AVR Atmega C Keras Yes Particular

Neural ESP8266 Scikit-learn developer

networks Linux

Naive Gaussian

Bayes

Random forest

uTensor [52] Neural mBed boards C++11 TensorFlow Yes Particular

networks developer

TinyMLgen Neural ARM Cortex-M C TensorFlow Yes Particular

[53] networks ESP32 Lite developer

CMix-NN [54] Neural ARM Cortex-M C Mobilenet Yes Research group

networks

FANN-on- Neural ARM Cortex-M C FANN Yes Research group

MCU [55] networks PULP

TinyML

Sensors Storage

Inertial

Weather MC

+

Health Memory RAT 1

... ...

GPS

Display RAT

... n

I/O

Ports

Battery APPS

Figure 4. Multi-RAT smart object architecture.

12 IEEE CIRCUITS AND SYSTEMS MAGAZINE THIRD QUARTER 2020

Authorized licensed use limited to: Universidad Politecnica de Cartagena. Downloaded on August 18,2020 at 08:22:56 UTC from IEEE Xplore. Restrictions apply. ell-known standards that can reach high data-rates.

w ing the device status, several aspects are considered. The

Other options based on cellular communications such as percentage of remaining battery (B) is one of the key factors

4 G/5G may be also considered. However, all these RATs to evaluate as the power consumption of both interfaces

require great amount of energy to work. For that reason, clearly differs. The coverage level (C) of each RAT {c1, c2},

LPWAN-based solutions such as LoRaWAN, Sigfox, or is also taken into account in order to avoid transmissions

Narrow Band—Internet of Things (NB-IoT) [19] are cur- over a lossy link. Besides, LoRaWAN makes use of license-

rently being adopted by the IoT market as they provide free bands, e.g., Industrial, Scientific and Medical (ISM), so

very long transmission distances with highly reduced en- the access to this wireless medium is normally limited by

ergy consumption, although with the limitation of severely international regulations. Thus, we model the availability

limiting the transmission rate. Given the heterogeneous of the LoRaWAN interface with the boolean feature L. We

characteristics of the available RATs, it is crucial to precise- consider that NB-IoT does not present this restriction as it

ly select the most adequate technology to guarantee both makes use of licensed spectrum. We also assume that there

the energy efficiency of the device as well as the QoS of are not dedicated queues for each interface; instead, we

the transmitted packets. At this point, TinyML comes into consider a One in—One out message-forwarding system.

play with the aim of smartly supporting this decision. As This assumption is made given the memory constraints of

discussed in next Section, a number of ML techniques may MCUs, which prevent them of storing great amount of data

be employed to this end, thanks to the algorithm adapta- in RAM. Two message features are also evaluated in the de-

tions provided by the afore-reviewed TinyML frameworks. cision process. The data size (S), in bytes, is considered as

This multi-R AT smart board can be integrated LoRaWAN has a maximum payload length of 240 B. Finally,

within a plethora of devices and gadgets, e.g., vehicles, the urgency level of the message (U) is also contemplated

wearables, sensors, robots, etc., which will be enriched with the aim of supporting different classes of services.

with intelligence and communication capabilities. These With the features identified above, the current

elements are connected to the cloud or other devices system status is defined by the following vector

through the available communication interfaces in or- (B, " c 1, c 2 ,, L, S, U ), which is employed for determining

der to gain access to a range of next-generation services, the most adequate action A ! " a 0, a 1, a 3 , . We tackle this

which are finally accessed by me a n s of e nd - u se r’s issue as a supervised multi-class classification problem.

personal devices such as smartphones or tablets. To this end, a dataset has been generated to train a num-

ber of algorithms that have been embedded in a real con-

B. Problem Statement strained device as explained in the following.

We consider the problem of intelligently selecting the most

adequate communication interface in a multi-RAT set up C. Dataset

when certain data is generated to be transmitted in a non- A synthetic training dataset of 10,000 samples, i.e., fea-

connection-oriented fashion. Concretely, given the architec- tures vectors, has been generated and each of the

ture presented above, we consider an end-device producing features composing the input vectors has received a

sporadic messages and that is equipped with two commu- value randomly calculated. The values for the message

nication interfaces, namely, LoRaWAN and NB-IoT. As both urgency (U) and the coverage of each RAT (c1, c2) have

of them are LPWAN-based solutions, they provide long been generated by assigning them uniformly distribut-

range communications with reduced power consumption, ed values in the range [0%, 100%]. The values for the

which are highly valued characteristics for moving devices. device’s remaining battery (B) and the packet size (S),

However, given these common general attributes, as shown have been randomly calculated in the ranges [1%, 100%]

in Table II, both present unique characteristics in terms of and [50 bytes, 500 bytes], respectively, following a uni-

bandwidth, uplink/downlink latency, duty-cycle, etc. There- form distribution as well. Finally, the LoRaWAN interface

fore, by considering these RAT features as well as the device availability (L), which was modeled as a boolean input,

status and the message characteristics, it should be decid- has received true/false values uniformly distributed.

ed whether to transmit a message or not, and if so, the most Each of the 10,000 samples has been labelled with the

adequate communication interface should be selected. most adequate action considering its input features. To

The set of all possible ac-

tions (A) is {a0, a1, a2}, where Table II.

a0 represents the action of Main characteristics of RATs under consideration.

dropping the message and {a1, RAT Throughput Latency RX Sensitivity Power Consumption

a2} indicates sending the data

LoRaWAN Low High High Low

using LoRaWAN or NB-IoT in-

terfaces, respectively. Regard- NB-IoT High Low Medium Medium

THIRD QUARTER 2020 IEEE CIRCUITS AND SYSTEMS MAGAZINE 13

Authorized licensed use limited to: Universidad Politecnica de Cartagena. Downloaded on August 18,2020 at 08:22:56 UTC from IEEE Xplore. Restrictions apply.this end, some hard restrictions have been established mized code in terms of memory allocation. We proved this

in order to select a communication interface for sending fact by porting to C code a number of models produced by

a given message (Table III). With these restrictions and Scikit-learn, namely, RF, decision trees, and SVM. In every

considering the characteristics of each RAT (Table II), we case, m2cgen generated non-compilable code for Arduino

have developed an automatic labelling scheme as follows. Uno due to unallowed memory and object manipulations.

LoRaWAN interface is preferred for small packets with low MicroMLGen is specialized in translating Scikit-

urgency, given its highly reduced power consumption. Nev- learn’s SVM models to Arduino-like platforms. It is able

ertheless, it is not the best option for transmitting urgent to handle different SVM kernels such as linear, polyno-

packets because of its limited throughput and the long la- mial, and Radial Basis Function (rbf). SVMs are highly

tency introduced. In turn, NB-IoT interface is more suitable sensitive to the number of training samples as they de-

for transporting urgent messages, given its lower latency termine the number of generated support vectors which

and higher data-rate. However, its more limited sensitivity have a direct impact on the required memory. Table IV

causes that its usage is not recommended with a coverage summarizes the attained results in terms of accuracy

level below 10%. The same applies to the battery level, and F1-score provided by Scikit-learn as well as program

when the remaining energy is below 10%, using the NB-IoT memory footprint and classification time in Arduino

interface is discarded due to its higher power consump- Uno. Regarding the latter, we present the average run-

tion. When one of these situations happen, short urgent ning time for 100 random input vectors as well as the

packets are sent via the LoRaWAN interface if it is available confidence interval ^a = 0.05 h . The alpha SVM param-

(L = true). Of course, considering the mentioned restric- eter has been fixed to 0.0001. It can be seen how the clas-

tions, some combinations of the input features may lead to sifier accuracy is improved by increasing the number of

the decision of dropping messages. training samples, no matter the kernel employed. The lin-

ear kernel is the fastest one and shows better accuracy

D. TinyML integration and evaluation than the rbf kernel, which presents the greatest memory

Given the notoriety and mass adoption of Arduino Uno, footprint. Observe that both of them surpass the maxi-

this board has been chosen as the testbench for evaluat- mum MCU’s program memory when employing 1000 and

ing the performance of TinyML frameworks. We consider 1250 training e xamples due to the large number of gen-

it as a good candidate for conducting this benchmarking erated support vectors. Finally, the polynomial kernel is

due to its processing and memory constraints, as it is the most accurate one and also presents the most mod-

equipped with an 8 bit processor at 16 MHz, flash mem- erate memory footprint. However, it is the slowest kernel

ory of 32 KB, and 2 kB of Static RAM (SRAM). According although always under the threshold of 200 ms.

to the device classification defined in [8], this platform sklearnporter has been evaluated when dealing with

belongs to the most constrained type of MCUs (Class 0). SVMs as well. However, the produced C code from the

Taking a look at Table I, the only publicly available Scikit-learn’s models is not optimized for constrained

frameworks that can produce code for Arduino Uno devices as mentioned previously, hence it provoked an

are MicroMLGen [46], sklearnporter [47], m2cgen [48], unstable behaviour of the Arduino unit. Given the incon-

and emlearn [51]. The workflow followed by these tools sistent performance obtained, we could not collect reli-

is similar in all of them: firstly, a selected algorithm is able outcomes to present here.

trained using a Python-based ML library; thereafter, the As discussed above, the family of NN-based algo-

model generated by this library is ported to C code by rithms is the one that has received greater attention

the corresponding TinyML translation tool; finally, the from the TinyML community. In this case, we have

produced code is integrated within an Arduino sketch evaluate the performance of the MLP implementation

by importing it as an external library. provided by the emlearn framework. We have employed

Regarding m2cgen, as discussed above, although this Scikit-learn for producing trained networks with differ-

framework is highly versatile as it supports many algo- ent number of hidden layers and neurons per layer, us-

rithms and output languages, it does not generate opti- ing the sigmoid activation function. As the number of

Table III.

Feature restrictions for generating the dataset.

Battery Coverage (C) LoRaWAN Packet Size (S) Urgency (U)

RAT Level (B) (%) (%) Availability (L) (bytes) (%)

LoRaWAN >5 >5 True 10 — — —

14 IEEE CIRCUITS AND SYSTEMS MAGAZINE THIRD QUARTER 2020

Authorized licensed use limited to: Universidad Politecnica de Cartagena. Downloaded on August 18,2020 at 08:22:56 UTC from IEEE Xplore. Restrictions apply.training samples does not impact on the weight of the for training and 20% for evaluating the produced classifier.

produced model, the 80% of the dataset has been em- Observe how these algorithms capture the logic employed

ployed for training and the other 20% for test. The ob- for labelling our synthetic dataset, which is evidenced by

tained outcomes are shown in Table V. It can be seen the good accuracy obtained. By increasing the depth of the

that in the MLP case the limiting factor is the Arduino decision tree, we improve the classifier performance up to

Uno’s SRAM, as the network’s weights and biases are a perfect accuracy of 1 with decision trees of 6 levels. Re-

stored there. Thus, when the number of neurons per lay- garding the RF classifier, we have evaluated one model con-

er is 10, the maximum number of hidden layers for not sisting of 10 estimators (trees) and we also obtain a perfect

consuming all the unit’s SRAM is 3. Regarding the algo- accuracy although the memory footprint and processing

rithm’s performance, a trade-off between accuracy and time are notably greater for this scheme. Comparing both

network complexity is evidenced. However, employing frameworks, sklearnporter provides faster and lighter deci-

one hidden layer with 4 neurons permits an accuracy of sion trees in comparison with emlearn. However, the oppo-

0.97, which is the best figure for this simple configura- site behaviour is obtained for the RF algorithm.

tion. It is clear the direct relationship between the over- In the light of the obtained results, the performance

all number of neurons in the network and the process- of decision trees and RF are notably better than that

ing time for each classification operation, which should of SVM and MLP for solving the intelligent communica-

be also considered when selecting the most adequate tion interface selection issue under consideration. They

system configuration. However, observe that the times achieve greater accuracy with reduced memory foot-

employed by the MLP classifier are notably shorter than print and computation delay. Therefore, for the specific

those needed by the MicroMLGen’s SVMs. The achieved case of smart multi-RAT configuration management,

accuracy is also better for the MLP algorithm. this family of algorithms would be the most adequate to

The adaptation of other well-known ML mechanisms be integrated within the MCU.

such as decision trees and RF has been evaluated as well. To sum up, it can be concluded that great advances have

To this end, we have employed the sklearn-porter and em- been made so far for integrating ML algorithms within fru-

learn frameworks to port the models generated by Scikit- gal devices. These achievements were unimaginable few

learn. In this case, sklearnporter produced suitable code time ago and they pave the way for the arrival of smart ap-

for Arduino Uno, given that decision trees and RF are light- plications and services that will not require a continuous

er than SVMs. A similar performance analysis to those processing support from the cloud. However, we are just in

presented previously is shown in Table VI. The dataset the inception of this movement and more efforts are need-

has been split as in the case of MLP evaluation, i.e., 80% ed for the integral development of the TinyML ecosystem.

Table IV.

SVM generated by MicroMLGen.

Samples/Kernel Linear Polynomial rbf

250 Accuracy = 0.7816 Accuracy = 0.7992 Accuracy = 0.7355

F1 = 0.7119 F1 = 0.7643 F1 = 0.6298

Memory = 10678 B Memory = 10264 B Memory = 12172 B

Time = 11.1±0.2 ms Time = 73.7±0.3 ms Time = 34.7±0.03 ms

500 Accuracy = 0.7871 Accuracy = 0.8552 Accuracy = 0.7472

F1 = 0.7161 F1 = 0.8074 F1 = 0.6661

Memory = 17782 B Memory = 15636 B Memory = 20574 B

Time = 22.3±0.1 ms Time = 73.2±0.7 ms Time = 72±0.047 ms

750 Accuracy = 0.7922 Accuracy = 0.8561 Accuracy = 0.75

F1 = 0.723 F1 = 0.8347 F1 = 0.6815

Memory = 25298 B Memory = 20642 B Memory = 26644 B

Time = 34.1±0.2 ms Time = 107.2±0.3 ms Time = 98.9±0.07 ms

1000 Accuracy = 0.7921 Accuracy = 0.8688 Accuracy = 0.7757

F1 = 0.7232 F1 = 0.8443 F1 = 0.7402

Memory = 30156 B Memory = 25530 B Memory = 35184 B

Time = 41.8±0.2 ms Time = 140.1±0.2 ms (out-of-range)

1250 Accuracy = 0.7939 Accuracy = 0.8757 Accuracy = 0.7818

F1 = 0.725 F1 = 0.845 F1 = 0.7484

Memory = 37930 B Memory = 31968 B Memory = 44058 B

(out-of-range) Time = 183.6±0.4 ms (out-of-range)

THIRD QUARTER 2020 IEEE CIRCUITS AND SYSTEMS MAGAZINE 15

Authorized licensed use limited to: Universidad Politecnica de Cartagena. Downloaded on August 18,2020 at 08:22:56 UTC from IEEE Xplore. Restrictions apply.Table V.

MLP generated by emlearn.

Hidden

layers/

Neurons 2 4 6 8 10

1 Accuracy = 0.8835 Accuracy = 0.9735 Accuracy = 0.961 Accuracy = 0.9705 Accuracy = 0.968

F1 = 0.8393 F1 = 0.9737 F1 = 0.9605 F1 = 0.9701 F1 = 0.9679

Memory = 6750 B Memory = 6830 B Memory = 6910 B Memory = 6990 B Memory = 7070 B

SRAM = 392 B SRAM = 472 B SRAM = 552 B SRAM = 648 B SRAM = 744 B

Time = 1.9+0.006 Time = 2.7+0.009 Time = 3.5+0.01 Time = 4.3+0.01 Time = 4.9+0.03

ms ms ms ms ms

2 Accuracy = 0.8045 Accuracy = 0.907 Accuracy = 0.9544 Accuracy = 0.9742 Accuracy = 0.9691

F1 = 0.7319 F1 = 0.878 F1 = 0.9541 F1 = 0.9742 F1 = 0.9691

Memory = 6790 B Memory = 6926 B Memory = 7094 B Memory = 7294 B Memory = 7526 B

SRAM = 430 B SRAM = 566 B SRAM = 734 B SRAM = 950 B SRAM = 1198 B

Time = 2.4+0.005 Time = 3.8+0.009 Time = 5.5+0.01 Time = 7.3+0.02 Time = 9.2+0.02

ms ms ms ms ms

3 Accuracy = 0.8903 Accuracy = 0.9746 Accuracy = 0.9543 Accuracy = 0.9705 Accuracy = 0.9795

F1 = 0.8431 F1 = 0.9745 F1 = 0.9532 F1 = 0.9709 F1 = 0.9797

Memory = 6826 B Memory = 7018 B Memory = 7274 B Memory = 7594 B Memory = 7978 B

SRAM = 468 B SRAM = 660 B SRAM = 916 B SRAM = 1252 B SRAM = 1652 B

Time = 2.9+0.01 Time = 5+0.01 ms Time = 7.4+0.02 Time = 10.1+0.02 Time = 13.2+0.03

ms ms ms ms

4 Accuracy = 0.888 Accuracy = 0.922 Accuracy = 0.924 Accuracy = 0.945 Accuracy = 0.944

F1 = 0.8392 F1 = 0.9242 F1 = 0.9238 F1 = 0.9453 F1 = 0.9422

Memory = 6864 B Memory = 7112 B Memory = 7456 B Memory = 7896 B Memory = 8432 B

SRAM = 506 B SRAM = 754 B SRAM = 1098 B SRAM = 1154 B SRAM = 2106 B

Time = 3.6+0.009 Time = 6.2+0.03 Time = 9.3+0.03 Time = 13+0.06 ms (out-of-range)

ms ms ms

5 Accuracy = 0.715 Accuracy = 0.882 Accuracy = 0.9285 Accuracy = 0.931 Accuracy = 0.9245

F1 = 0.6108 F1 = 0.8385 F1 = 0.9269 F1 = 0.9297 F1 = 0.9183

Memory = 6902 B Memory = 7206 B Memory = 7638 B Memory = 8198 B Memory = 8886 B

SRAM = 544 B SRAM = 848 B SRAM = 1280 B SRAM = 1856 B SRAM = 2560 B

Time = 3.9+0.03 Time = 7.3+0.03 Time = 11.1+0.07 Time = 15.8+0.08 (out-of-range)

ms ms ms ms

Table VI.

Decision trees and RF generated by sklearnporter and emlearn.

Library/ Decision Tree Decision Tree Decision Tree Decision Tree RF Estimators

Algorithm Depth = 3 Depth = 4 Depth = 5 Depth = 6 = 10

sklearnporter Accuracy = Accuracy = Accuracy = Accuracy = 1 Accuracy = 1

0.9155 0.9155 0.9935 F1 = 1 F1 = 1

F1 = 0.8886 F1 = 0.8886 F1 = 0.9935 Memory = 3770 B Memory = 27814 B

Memory = 3610 B Memory = 3670 B Memory = 3730 B Time = 48.75±5.6 Time = 252.24±7

Time = Time = Time = μs μs

40.56±0.63 μs 45.28±1.55 μs 48.32±9.48 μs

emlearn Accuracy = Accuracy = Accuracy = Accuracy = 1 Accuracy = 1

0.9155 0.9155 0.9935 F1 = 1 F1 = 1

F1 = 0.8886 F1 = 0.8886 F1 = 0.9935 Memory = 40016 B Memory = 13160 B

Memory = 3840 B Memory = 3904 B Memory = 3952 B Time = Time =

Time = Time = Time = 104.08±3.87 μs 193.6±6.06 μs

103.28±3.87 μs 103.58±7.18 μs 103.96±83.82 μs

16 IEEE CIRCUITS AND SYSTEMS MAGAZINE THIRD QUARTER 2020

Authorized licensed use limited to: Universidad Politecnica de Cartagena. Downloaded on August 18,2020 at 08:22:56 UTC from IEEE Xplore. Restrictions apply.V. Conclusions services, management of wireless mobile networks, green

The integration of ML within IoT devices will provide them networking techniques, and IoT/M2M architectures.

with a flexibility and processing capabilities never seen be-

fore. The TinyML paradigm proposes to adapt advanced Antonio Skarmeta received the B.S. de-

ML techniques to the constraints of MCUs in order to con- gree (Hons.) from the University of Mur-

vert them in real smart objects. In this work, a comprehen- cia, Spain, the M.S. degree from the Uni-

sive review and discussion of this novel and vibrating eco- versity of Granada, and the Ph.D. degree

system have been presented. The related challenges and from the University of Murcia, all in com-

opportunities have been identified as well as the potential puter science. He has been a Full Profes-

services that will be enabled by these intelligent small el- sor with the University of Murcia, since 2009. He has been

ements. The TinyML frameworks available in the market part of many EU FP projects and even coordinated some of

that currently permit the integration of complex ML mecha- them. He has published more than 200 international arti-

nisms within MCUs have been also reviewed and their ap- cles. His main interests include the integration of security

plicability has been discussed. Finally, a realistic use case services, identity, the IoT, 5G, and smart cities.

has been explored. Concretely, a multi-RAT smart object ar-

chitecture has been proposed and the ML integration pro- References

cess has been explained. A number of TinyML frameworks [1] L. Columbus, “10 Charts that will change your perspective of big

data’s growth,” Tech. Rep., 2018. [Online]. Available: https://software

have been employed for solving the problem of selecting

strategiesblog.com/2018/06/02/10 - charts-that-will- change -your

the most adequate communication interface considering -perspective-of-big-datas-growth/

both the status of the device and the characteristics of the [2] Y. Sun, M. Peng, Y. Zhou, Y. Huang, and S. Mao, “Application of ma-

chine learning in wireless networks: Key techniques and open issues,”

data to be sent. We have demonstrated that several classifi- IEEE Commun. Surveys Tut., vol. 21, no. 4, pp. 3072–3108, 2019. doi:

cation algorithms can be integrated within an Arduino Uno 10.1109/COMST.2019.2924243. [Online]. Available: https://ieeexplore

.ieee.org/document/8743390/

board for tackling this issue, namely, SVM, MLP, decision

[3] T. Baltrusaitis, C. Ahuja, and L.-P. Morency, “Multimodal ma-

trees, and RF. From the attained outcomes, we can conclude chine learning: A survey and taxonomy,” IEEE Trans. Pattern Anal.

that decisions trees and RF present the best performance Mach. Intell., vol. 41, no. 2, pp. 423 – 4 43, Feb. 2019. doi: 10.1109/

TPAMI.2018.2798607. [Online]. Available: https://ieeexplore.ieee.org/

in terms of accuracy, memory footprint, and classification document/8269806/

speed. Other algorithms also showed good results in terms [4] Q. Chen et al., “A survey on an emerging area: Deep learning for

of classification accuracy but their implementation should smart city data,” IEEE Trans. Emerg. Topics Comput. Intell., vol. 3, no. 5,

pp. 392–410, Oct. 2019. doi: 10.1109/TETCI.2019.2907718. [Online]. Avail-

be carefully addressed given the memory restrictions of able: https://ieeexplore.ieee.org/document/8704334/

the considered MCU. As future work, we plan to consider [5] G. Li, R. Gomez, K. Nakamura, and B. He, “Human-centered rein-

forcement learning: A survey,” IEEE Trans. Human-Mach. Syst., vol. 49,

other constrained devices in order to benchmark additional no. 4, pp. 337–349, Aug. 2019. doi: 10.1109/THMS.2019.2912447. [Online].

TinyML frameworks. Besides, other relevant aspects such Available: https://ieeexplore.ieee.org/document/8708686/

as decision safety and model correctness in sensitive fields [6] T. Luo et al., “DaDianNao: A neural network supercomputer,” IEEE Trans.

Comput., vol. 66, no. 1, pp. 73–88, Jan. 2017. doi: 10.1109/TC.2016.2574353.

of application, e.g., eHealth, will be considered. [Online]. Available: http://ieeexplore.ieee.org/document/7480791/

[7] B. Garbinato, R. Guerraoui, J. Hulaas, M. Monod, and J. H. Spring, “Per-

vasive computing with frugal objects,” in Proc. IEEE 21st Int. Conf. Advanced

Acknowledgment

Information Networking and Applications Workshops (AINAW’07), 2007, pp.

This work has been supported by the European Commis- 13–18. [Online]. Available: http://ieeexplore.ieee.org/document/4224076/

sion, under the projects IoTCrawler (Grant No. 779852), [8] C. Bormann, M. Ersue, and A. Keranen, Terminology for Constrained-

Node Networks, IETF RFC 7228, 2014.

CyberSec4Europe (Grant No. 830929) and Fed4IoT (Grant [9] M. S. Hadj Sassi, F. G. Jedidi, and L. C. Fourati, “A new architecture

No. 814918), and by the Spanish Ministry of Science, In- for cognitive internet of things and big data,” Proc. Comput. Sci., vol. 159,

pp. 534–543, 2019. doi: 10.1016/j.procs.2019.09.208. [Online]. Available:

novation and Universities, under the project PERSEIDES

https://linkinghub.elsevier.com/retrieve/pii/S1877050919313924

(Grant No. TIN2017-86885-R with ERDF funds). [10] R. Roman, J. Lopez, and M. Mambo, “Mobile edge computing, Fog

et al.: A survey and analysis of security threats and challenges,” Fu-

ture Gener. Comput. Syst., vol. 78, pp. 680–698, Jan. 2018. doi: 10.1016/j.

Ramon Sanchez-Iborra received the future.2016.11.009. [Online]. Available: https://linkinghub.elsevier.com/

B.Sc. degree in telecommunication engi- retrieve/pii/S0167739X16305635

neering and the M.Sc. and Ph.D. degrees [11] B. Martinez, M. Monton, I. Vilajosana, and J. D. Prades, “The power of

models: modeling power consumption for IoT devices,” IEEE Sensors J.,

in information and communication tech- vol. 15, no. 10, pp. 5777–5789, Oct. 2015. doi: 10.1109/JSEN.2015.2445094.

nologies from the Technical University [Online]. Available: http://ieeexplore.ieee.org/document/7122861/

[12] TinyML. 2020. [Online]. Available: https://www.tinyml.org/

of Cartagena, in 2007, 2013, and 2016, re- [13] C. MacGillivray and M. Torchia, “Internet of Things: Spending

spectively. He is currently an Assistant Professor and a trends and outlook,” Tech. Rep., 2019. [Online]. Available: https://www

Researcher with the Information and Communications .idc.com/getdoc.jsp?containerId=US45161419

[14] H. Doyu, “TinyML as a Service and the challenges of machine learn-

Engineering Department, University of Murcia. His main ing at the edge,” Ericsson, Tech. Rep., 2019. [Online]. Available: https://

research interests are evaluation of QoE in multimedia www.ericsson.com/en/blog/2019/12/tinyml-as-a-service

THIRD QUARTER 2020 IEEE CIRCUITS AND SYSTEMS MAGAZINE 17

Authorized licensed use limited to: Universidad Politecnica de Cartagena. Downloaded on August 18,2020 at 08:22:56 UTC from IEEE Xplore. Restrictions apply.You can also read